Disclaimer – the alternative title is the start of one of my favorite jokes – in the interests of keeping this post PG-13, I’ll post the punch line at the end.

It never ceases to amaze me how selectively paranoid we are as a society. I know I’m not alone in avoiding certain behaviors because they seem too risky – I always wear my seatbelt (even in a pick-up driving at 10 mph through a pasture), I don’t put my phone in my lap (who knows what invisible radio waves are frying my internal organs?) and I’m convinced that if I eat a Twinkie (RIP?) it’ll instantly turn me into a 400-lb couch potato. Yet I also drive too fast on the interstate, drink enough coffee to keep a polar bear wired for days and have the misguided impression that I can survive on 4 hours’ sleep per night (thank you NCBA Cattle Industry Convention 2013 for proving me right last week). There’s no doubt that I’m more likely to come to harm from the latter set of behaviors than the former, so why the dichotomy?

It appears to come down to two main factors:

- The perception of relative risk – am I more likely to be injured from driving fast or from not wearing a seatbelt?

- The extent of our knowledge about the subject – I know what risks come with caffeine consumption and I accept them in exchange for improved work productivity, but who knows how addictive Twinkies really are? There’s a reason they’re sold in multi-packs…

Thanks to the proliferation of media articles and books about food production, we’re more educated as a society than we were 10 years ago, yet we still fail to understand the concept of relative risk:

- Environmentally, the Meatless Mondays campaigns appear to make people feel good about saving the planet even as they drive their Hummer to Whole Foods to buy quinoa and kale salad for dinner

- Socially, reusing grocery bags reduces waste, yet appears to come with a far higher risk of contracting E. coli (thank you David Hayden)

- Healthwise, I have lost count of the conversations I’ve had with highly educated, health-conscious women who have stopped feeding beef or milk to their kids because of the hormones used in beef or dairy production. Yet this is one area where we have a huge amount of data; we just need to put it in context.

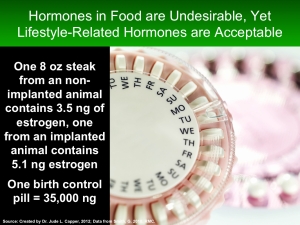

Yes, an 8-oz steak from a steer given a hormone implant contains more estrogen than a steak from a non-implanted animal. 42% more estrogen, in fact. That’s undeniable. Yet the amount of estrogen in the steak from the implanted animal is minuscule: 5.1 nanograms. One nanogram (one-billionth of a gram, or roughly one-28-billionth of an ounce) is roughly equivalent to one blade of grass on a football field.
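
For anyone who wants to check the arithmetic, here’s a minimal Python sketch that works backward from the two figures above (5.1 ng, and 42% more than a non-implanted steak). The non-implanted value it produces is back-calculated from those numbers, not a separately measured figure, and the variable names are just illustrative.

```python
# Figures from the text: an 8-oz steak from an implanted steer contains
# 5.1 ng of estrogen, which is 42% more than a steak from a non-implanted steer.
IMPLANTED_NG = 5.1
PERCENT_MORE = 42

# implanted = non_implanted * (1 + 0.42), so divide to recover the baseline
non_implanted_ng = IMPLANTED_NG / (1 + PERCENT_MORE / 100)

# Absolute extra estrogen per 8-oz steak, in nanograms
difference_ng = IMPLANTED_NG - non_implanted_ng

print(f"Non-implanted steak: {non_implanted_ng:.1f} ng")
print(f"Extra estrogen from the implant: {difference_ng:.1f} ng")
```

In other words, that headline-grabbing 42% works out to roughly 1.5 extra nanograms per 8-oz steak.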

By contrast, one birth-control pill, taken daily by over 100 million women worldwide, contains 35,000 nanograms of estrogen. That’s the equivalent of eating 3,431 lbs of beef from a hormone-implanted animal, every single day. To put it another way, it’s the annual beef consumption of 59 adults. Doesn’t that put it into perspective?
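
Here’s how those numbers fit together, as a quick Python sketch using only the figures quoted above. Note that the “59 adults” comparison implies roughly 58 lbs of beef per adult per year; the sketch derives that from the post’s own numbers rather than from any external consumption statistic.

```python
# Figures from the text
PILL_NG = 35_000   # estrogen in one birth-control pill (ng)
STEAK_NG = 5.1     # estrogen in one 8-oz steak from an implanted steer (ng)
STEAK_LB = 8 / 16  # an 8-oz steak is half a pound
PEOPLE = 59        # number of adults in the comparison

# How many 8-oz steaks match the estrogen in a single pill
steaks_per_pill = PILL_NG / STEAK_NG

# Convert that number of steaks into pounds of beef per day
beef_lb_per_pill = steaks_per_pill * STEAK_LB

# Per-adult annual beef intake implied by the "59 adults" comparison
implied_annual_lb = beef_lb_per_pill / PEOPLE

print(f"{steaks_per_pill:,.0f} steaks = {beef_lb_per_pill:,.0f} lbs of beef per day")
print(f"Implied annual beef consumption per adult: {implied_annual_lb:.0f} lbs")
```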

If birth control is a sensitive subject, let’s compare it to vegetables: one 8-oz serving of cabbage contains 5,411 nanograms of estrogen, over 1,000 times more estrogen than the same serving size of steak from a steer given a hormone implant. Yet Huffington Post, TIME magazine et al. aren’t up in arms about the dangers posed by cabbage consumption (N.B. to the ~4,000 cabbage producers in the USA: please don’t send me hate mail; this is just an example).
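
The cabbage comparison is a single division of the two per-serving figures quoted above; this short Python sketch just makes that step explicit.

```python
CABBAGE_NG = 5_411  # estrogen in an 8-oz serving of cabbage (ng), from the text
STEAK_NG = 5.1      # estrogen in an 8-oz implanted-steer steak (ng), from the text

# How many times more estrogen the cabbage serving contains
ratio = CABBAGE_NG / STEAK_NG

print(f"Cabbage contains about {ratio:,.0f} times the estrogen of the steak")
```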

Hormones are directly or indirectly responsible for everything that we do each day, from waking up to going to sleep, from the mundane to the life-changing. Yes, they are an intrinsic part of childhood development, yet the earlier onset of puberty we’re currently seeing in children has been attributed to increased levels of body fat (i.e. childhood obesity), not to exogenous hormone consumption. I’m not downplaying the consequences that hormones have on our long-term health and survival, just asking for a little balance – after all, where’s the risk in that?

*Oh, and the punch line to the joke above… “Don’t pay her!” (Sorry…)